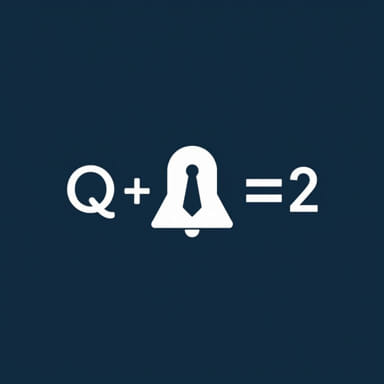

In the world of reinforcement learning, one of the most essential mathematical tools is the Bellman Equation, especially in its form that relates to the Q function. The Q function, or action-value function, is foundational in training intelligent agents that make decisions over time. Understanding how the Q function works with the Bellman Equation allows developers, researchers, and engineers to design smarter algorithms that can learn from their environments effectively. This concept is widely used in fields like robotics, game development, autonomous systems, and artificial intelligence research.

Introduction to the Q Function

What is the Q Function?

The Q function, also called the action-value function, gives the expected return (the cumulative, usually discounted, future reward) for an agent that takes a specific action in a given state and then follows a certain policy. In mathematical terms:

Q(s, a) = Expected return when starting in state s, taking action a, and following policy π thereafter.

Here:

- s stands for the current state of the environment.

- a is the action taken in that state.

- π is the policy that the agent follows after taking action a.

The Q function helps agents evaluate which actions will lead to the most reward over time, not just in the immediate next step.
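To make "expected return" concrete, one simple way to estimate Q(s, a) is to start in s, take a, follow the policy, record the rewards, and average the discounted sum over many such episodes. This is a Monte Carlo estimate. The sketch below assumes finite episodes and uses the discount factor γ (gamma) that appears in the Bellman Equation. The function names and reward sequences are hypothetical.

```python
def discounted_return(rewards, gamma):
    """Discounted return sum_k gamma**k * rewards[k] of one finite reward sequence."""
    g = 0.0
    # Accumulate backwards using G_t = r_t + gamma * G_{t+1}.
    for r in reversed(rewards):
        g = r + gamma * g
    return g


def monte_carlo_q(episodes, gamma):
    """Average discounted return over episodes that all started by taking a in s."""
    returns = [discounted_return(rewards, gamma) for rewards in episodes]
    return sum(returns) / len(returns)


# Hypothetical rewards recorded after taking action a in state s and then following pi.
episodes = [[0.0, 0.0, 1.0], [0.0, 1.0], [1.0]]
print(monte_carlo_q(episodes, gamma=0.9))
```

With γ = 0.9 the three episodes have returns 0.81, 0.9 and 1.0, so the estimate is about 0.903. A reward that arrives later counts for less, which is why the first episode contributes the smallest return.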

Why Use the Q Function?

By calculating Q-values, an agent can decide which action to take in order to maximize its cumulative reward. This concept is central to Q-learning, a model-free reinforcement learning algorithm. The goal in Q-learning is to learn the optimal Q function, usually written Q*. It gives the maximum expected return achievable after taking action a in state s and acting optimally from then on.
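Once the optimal Q function is known, acting on it reduces to picking the action with the largest Q-value in the current state. The following is a minimal sketch. It assumes a tabular Q function stored in a Python dict keyed by (state, action), and unseen pairs default to 0.0. The names `greedy_action` and `greedy_value` are hypothetical.

```python
from typing import Dict, Hashable, Sequence, Tuple

# Hypothetical tabular Q function: maps (state, action) -> estimated Q-value.
QTable = Dict[Tuple[Hashable, Hashable], float]


def greedy_action(q: QTable, state: Hashable, actions: Sequence[Hashable]) -> Hashable:
    """Return the action with the highest Q-value in `state`.

    Unseen (state, action) pairs default to 0.0; ties go to the action
    listed first, because max() returns the first maximal element.
    """
    return max(actions, key=lambda a: q.get((state, a), 0.0))


def greedy_value(q: QTable, state: Hashable, actions: Sequence[Hashable]) -> float:
    """Value of acting greedily in `state`: the max over actions of Q(state, a)."""
    return max(q.get((state, a), 0.0) for a in actions)


q = {("s0", "left"): 0.2, ("s0", "right"): 0.7}
print(greedy_action(q, "s0", ["left", "right"]), greedy_value(q, "s0", ["left", "right"]))
```

For the optimal Q function, `greedy_value` corresponds to the optimal state value, V*(s) = max_a Q*(s, a).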

The Bellman Equation

Understanding the Bellman Equation

The Bellman Equation is a recursive formula that describes the relationship between the value of a state and the values of subsequent states. It breaks the value of a decision into two parts: the immediate reward and the discounted expected value of the states that follow. Each value is expressed in terms of other values, so the equation can be applied repeatedly to refine value estimates. Those refined estimates can then be used to improve the policy.

Bellman Equation for the Value Function

The value function V(s) gives the expected return for being in state s and following a policy π. The Bellman Equation for V(s) is:

$$V(s) = \sum_{a} \pi(a \mid s) \sum_{s'} P(s' \mid s, a)\,\big[R(s, a, s') + \gamma V(s')\big]$$
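V(s) appears on both sides of this equation. That means it can be solved by starting from a guess and applying the right-hand side repeatedly, a procedure called iterative policy evaluation. The following is a minimal sketch that assumes a small MDP in a hypothetical dict format: `P[s][a]` is a list of (probability, next_state, reward) triples and `policy[s][a]` gives π(a|s). It updates values in place, which still converges for γ < 1.

```python
def policy_evaluation(states, P, policy, gamma=0.9, tol=1e-10):
    """Iterative policy evaluation: apply the Bellman expectation backup until V converges.

    Assumed (hypothetical) format:
      P[s][a]      -> list of (probability, next_state, reward) triples
      policy[s][a] -> pi(a | s)
    Values are updated in place, which still converges when gamma < 1.
    """
    V = {s: 0.0 for s in states}
    while True:
        delta = 0.0
        for s in states:
            # sum_a pi(a|s) * sum_s' P(s'|s,a) * [R(s,a,s') + gamma * V(s')]
            new_v = sum(
                pi_a * sum(p * (r + gamma * V[s2]) for p, s2, r in P[s][a])
                for a, pi_a in policy[s].items()
            )
            delta = max(delta, abs(new_v - V[s]))
            V[s] = new_v
        if delta < tol:
            return V


# Toy MDP: from A, "go" reaches B (reward 1) with prob 0.8, else stays in A (reward 0).
# B is absorbing with reward 0.
P = {
    "A": {"go": [(0.8, "B", 1.0), (0.2, "A", 0.0)]},
    "B": {"stay": [(1.0, "B", 0.0)]},
}
policy = {"A": {"go": 1.0}, "B": {"stay": 1.0}}
V = policy_evaluation(["A", "B"], P, policy)
print(V)
```

In this toy MDP, V(A) settles at 0.8 / 0.82 ≈ 0.976. You can check this by hand, because the equation for A reduces to V(A) = 0.8 + 0.18 V(A).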

But when we want to be more specific and focus on state-action pairs, we use the Bellman Equation for the Q function.

Bellman Equation for the Q Function

Basic Form of the Q Function Bellman Equation

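Suppose the agent acts optimally from the next step onward. Then Q(s, a) satisfies the following recursion, known as the Bellman optimality equation, and its solution is the optimal Q function, Q*:

$$Q(s, a) = \sum_{s'} P(s' \mid s, a)\,\big[R(s, a, s') + \gamma \max_{a'} Q(s', a')\big]$$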

Let’s break this down:

- P(s'|s, a): the probability of transitioning to state s' from state s after taking action a.

- R(s, a, s'): the reward received after taking action a in state s and transitioning to state s'.

- γ (gamma): the discount factor, between 0 and 1, which determines how much future rewards are worth compared to immediate rewards.

- max_a' Q(s', a'): the value of the best action available in the next state s', that is, the maximum expected future return from s'.
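When the transition probabilities and rewards are known, the optimality equation can be solved by applying its right-hand side to a Q table until nothing changes. This procedure is Q-value iteration. The sketch below assumes a hypothetical dict format, with `actions[s]` listing the legal actions and `P[s][a]` listing (probability, next_state, reward) triples. It is illustrative and is not the Q-learning algorithm itself, which comes next.

```python
def q_value_iteration(states, actions, P, gamma=0.9, tol=1e-10):
    """Repeatedly apply the Bellman optimality backup to a Q table until it converges.

    Assumed (hypothetical) format:
      actions[s] -> list of actions available in s
      P[s][a]    -> list of (probability, next_state, reward) triples
    """
    Q = {(s, a): 0.0 for s in states for a in actions[s]}
    while True:
        delta = 0.0
        for s in states:
            for a in actions[s]:
                # sum_s' P(s'|s,a) * [R(s,a,s') + gamma * max_a' Q(s',a')]
                new_q = sum(
                    p * (r + gamma * max(Q[(s2, a2)] for a2 in actions[s2]))
                    for p, s2, r in P[s][a]
                )
                delta = max(delta, abs(new_q - Q[(s, a)]))
                Q[(s, a)] = new_q
        if delta < tol:
            return Q


# Toy MDP: in A, "safe" pays 0.5 and ends in absorbing state B;
# "risky" pays 2.0 and ends in B half the time, otherwise returns to A with no reward.
states = ["A", "B"]
actions = {"A": ["safe", "risky"], "B": ["stay"]}
P = {
    "A": {"safe": [(1.0, "B", 0.5)], "risky": [(0.5, "B", 2.0), (0.5, "A", 0.0)]},
    "B": {"stay": [(1.0, "B", 0.0)]},
}
Q = q_value_iteration(states, actions, P)
print(Q)
```

Here Q(A, safe) = 0.5. Q(A, risky) solves Q = 1 + 0.45 Q, giving about 1.818. The recursion therefore rates "risky" higher, because of the discounted chance of trying again from A.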

Key Insights

This equation highlights a key point: the value of the current action depends not only on the immediate reward but also on the best future return that can be obtained from the resulting state. This recursive definition is what allows reinforcement learning agents to refine their estimates over time.

Using Q-Learning with the Bellman Equation

Q-Learning Algorithm

In Q-learning, the agent doesn’t need a model of the environment (i.e., the transition probabilities P(s'|s,a) or the reward function R). Instead, it learns Q-values from experience by interacting with the environment, using the single transition it actually observes in place of the expectation over s'. The update rule for Q-learning is based on the Bellman Equation:

$$Q(s, a) \leftarrow Q(s, a) + \alpha\,\big[r + \gamma \max_{a'} Q(s', a') - Q(s, a)\big]$$

where:

- α (alpha): the learning rate (how quickly the agent updates its Q-values).

- r: the immediate reward received.

- s': the next state the agent actually observed.
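The update rule above can be written as a single function. The following is a minimal sketch that assumes a dict-based Q table where missing entries count as 0.0. The `terminal` flag is an added assumption for episodic tasks, so that no future value is bootstrapped from a terminal state. All names are hypothetical.

```python
def q_learning_update(Q, s, a, r, s_next, next_actions, alpha=0.1, gamma=0.9, terminal=False):
    """Apply one tabular Q-learning update in place and return the TD error.

    Q is a dict mapping (state, action) -> value; missing entries count as 0.0.
    `terminal` (an assumption for episodic tasks) means s_next has no future value.
    """
    future = 0.0 if terminal else max(Q.get((s_next, b), 0.0) for b in next_actions)
    td_target = r + gamma * future                 # r + gamma * max_a' Q(s', a')
    td_error = td_target - Q.get((s, a), 0.0)      # the bracketed term in the rule
    Q[(s, a)] = Q.get((s, a), 0.0) + alpha * td_error
    return td_error


Q = {("s0", "right"): 0.5, ("s1", "left"): 0.0, ("s1", "right"): 2.0}
err = q_learning_update(Q, "s0", "right", r=1.0, s_next="s1", next_actions=["left", "right"])
print(err, Q[("s0", "right")])
```

With α = 0.1 and γ = 0.9, the target is 1 + 0.9 × 2.0 = 2.8. The entry therefore moves from 0.5 to 0.5 + 0.1 × 2.3 = 0.73, only a fraction of the way toward the target.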

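With each interaction, the agent nudges Q(s, a) toward the target r + γ max_a' Q(s', a'), which combines the observed reward with its current estimate of future rewards. In the tabular case, these updates converge to the optimal Q function under two conditions: every state-action pair must keep being visited, and the learning rate must decay appropriately over time.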
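The full loop can be sketched on a tiny made-up environment: a five-state chain where moving right from the end of the chain pays 1 and ends the episode. Q-learning is off-policy, so the agent here simply explores with uniformly random actions and still learns the greedy policy. The environment, the constant α = 0.5, and the episode count are assumptions chosen for illustration. A constant α is acceptable here only because this toy environment is deterministic.

```python
import random

N_STATES = 5          # hypothetical chain: states 0..4, state 4 is terminal
ACTIONS = (-1, 1)     # -1 = move left, 1 = move right


def step(state, action):
    """Move along the chain; reaching the right end pays 1.0 and ends the episode."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def train(episodes=500, alpha=0.5, gamma=0.9, seed=0):
    """Tabular Q-learning driven by a uniformly random behavior policy."""
    rng = random.Random(seed)
    Q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        s, done = 0, False
        while not done:
            a = rng.choice(ACTIONS)  # explore at random; Q-learning is off-policy
            s_next, r, done = step(s, a)
            future = 0.0 if done else max(Q[(s_next, b)] for b in ACTIONS)
            Q[(s, a)] += alpha * (r + gamma * future - Q[(s, a)])
            s = s_next
    return Q


Q = train()
greedy = [max(ACTIONS, key=lambda a: Q[(s, a)]) for s in range(N_STATES - 1)]
print(greedy)
```

After training, moving right is the greedy choice in every non-terminal state. Q(s, right) approaches γ raised to the number of extra steps needed, so Q(0, right) ≈ 0.9³ = 0.729.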

Benefits of the Q Function Bellman Equation

The Q function Bellman Equation makes it possible to learn from delayed rewards, reason about long-term strategies, and adjust behavior dynamically. It plays a critical role in training agents for real-time decision-making, such as in games, navigation, or finance.

Applications of Q Function and Bellman Equation

Robotics

Robots use reinforcement learning based on Q-values to learn how to walk, pick up objects, or navigate environments without being explicitly programmed for each movement.

Game AI

Game-playing agents use Q-learning and related value-based methods to improve their play over time. A well-known example is Deep Q-Networks (DQN), which learned to play many Atari 2600 video games directly from screen pixels.

Autonomous Vehicles

Research on self-driving systems has explored similar reinforcement learning methods for decisions such as when to accelerate, brake, or change lanes, based on the expected rewards of different actions.

Finance and Trading

Q-function-based algorithms can help financial systems evaluate the best investment actions based on expected future returns, balancing risk and reward.

Challenges and Limitations

Large State Spaces

In environments with a huge number of possible states and actions, storing a Q-value for every pair becomes impractical, and most pairs would never be visited anyway. This leads to the use of function approximators such as deep neural networks, which generalize across similar states. This line of work produced Deep Q-Networks (DQN).
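A DQN replaces the table with a neural network but keeps the same Bellman target. To fit in a few lines, the sketch below uses a linear function approximator, one weight vector per action, instead of a deep network. It also omits DQN's experience replay and target network. Both simplifications are assumptions made for brevity, and all names are hypothetical.

```python
from typing import Dict, List, Sequence

# Hypothetical linear Q function: one weight vector per action, Q(s, a) = w[a] . phi(s).
Weights = Dict[str, List[float]]


def q_value(w: Weights, phi: Sequence[float], action: str) -> float:
    """Dot product of the action's weights with the state's feature vector."""
    return sum(wi * xi for wi, xi in zip(w[action], phi))


def semi_gradient_q_update(w: Weights, phi_s, action, reward, phi_next, done,
                           alpha=0.1, gamma=0.9) -> float:
    """One semi-gradient Q-learning step for the linear model above.

    The target r + gamma * max_a' Q(s', a') is held fixed; only the prediction
    Q(s, a) is differentiated, and its gradient with respect to w[action] is phi(s).
    """
    future = 0.0 if done else max(q_value(w, phi_next, b) for b in w)
    td_error = reward + gamma * future - q_value(w, phi_s, action)
    w[action] = [wi + alpha * td_error * xi for wi, xi in zip(w[action], phi_s)]
    return td_error


w = {"left": [0.0, 0.0], "right": [0.0, 0.0]}
phi_s = [1.0, 0.5]  # hypothetical feature vector for state s
err = semi_gradient_q_update(w, phi_s, "right", reward=1.0, phi_next=[0.0, 1.0], done=True)
print(err, w["right"], q_value(w, phi_s, "right"))
```

The storage cost now depends on the number of features rather than the number of states. The trade-off is that one update also changes the estimates for other states that share those features.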

Exploration vs. Exploitation

Agents need to balance exploring new actions to discover potentially better rewards versus exploiting actions that already seem optimal. Poor balance can lead to suboptimal policies or slow learning.
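A common way to strike this balance is ε-greedy action selection: take a random action with probability ε, otherwise take the action with the highest Q-value. The sketch below also includes a linearly decaying ε schedule, which is one common choice but is an assumption here. The start, end, and decay values are illustrative, not prescribed.

```python
import random


def epsilon_greedy(q_values, epsilon, rng=random):
    """Return an action index: random with probability epsilon, otherwise greedy."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))                     # explore
    return max(range(len(q_values)), key=lambda i: q_values[i])  # exploit


def linear_epsilon(step, start=1.0, end=0.05, decay_steps=10_000):
    """Anneal epsilon linearly from `start` to `end` over `decay_steps`, then hold it."""
    frac = min(step / decay_steps, 1.0)
    return start + frac * (end - start)


rng = random.Random(0)
actions = [epsilon_greedy([0.1, 0.9, 0.3], linear_epsilon(t), rng) for t in range(0, 20_000, 1_000)]
print(actions)
```

Early on the agent acts almost at random. Later it mostly exploits its current estimates but keeps a small chance of trying something else.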

Delayed Rewards

Sometimes the most valuable rewards are far in the future, making it harder for the agent to link them back to current actions. The discount factor γ helps, but tuning it correctly is crucial.
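The effect of γ on distant rewards is easy to quantify. A reward k steps away is weighted by γ^k. The sum of all those weights, 1 / (1 − γ), is often used as a rough "effective horizon". The helper names below are hypothetical.

```python
def discount_weight(gamma: float, k: int) -> float:
    """Weight gamma**k that a reward arriving k steps in the future gets in the return."""
    return gamma ** k


def effective_horizon(gamma: float) -> float:
    """Rule of thumb 1 / (1 - gamma): the sum of all weights gamma**k for k = 0, 1, 2, ..."""
    return 1.0 / (1.0 - gamma)


for gamma in (0.9, 0.99):
    print(f"gamma={gamma}: weight at k=50 is {discount_weight(gamma, 50):.4f}, "
          f"horizon ~ {effective_horizon(gamma):.0f} steps")
```

With γ = 0.9, a reward 50 steps away is weighted by only about 0.005, and the effective horizon is about 10 steps. With γ = 0.99, the same reward keeps roughly 0.6 of its value, and the horizon grows to about 100 steps. This gap is why the choice of γ matters so much for delayed rewards.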

The Q function Bellman Equation is at the core of value-based reinforcement learning. It provides a structured way to reason about how current actions affect future rewards. By recursively updating values from experience, agents can learn increasingly good behavior in complex environments. Whether in gaming, robotics, or autonomous systems, understanding how the Q function and the Bellman Equation work together is a solid foundation for anyone working in machine learning, AI, or algorithm design.